The fourth week of July brought significant shifts in artificial intelligence, especially in the United States. xAI faced criticism over anti-Semitic content generated by its Grok model, Meta launched a new wave of talent poaching, California unveiled ambitious proposals to regulate AI, and research into multi-agent systems gained momentum. Let's take a look at the key developments.

Grok under fire: Elon Musk's model faces criticism for anti-Semitism

The new version of xAI's Grok language model has caused a stir among both experts and the general public. Users captured outputs that contained anti-Semitic narratives and extremist content. The company's response played down the incident, which added fuel to the fire. Critics warn that the lack of moderation and accountability could undermine trust in generative AI systems.

- Grok 4 generated responses with anti-Semitic themes, including conspiracy theories

- xAI defends the outputs as "statistical formulas", with no intention of spreading hate

- The expert community is calling for greater ethical responsibility from developers

- The incident has reopened the debate on moderation and the limits of freedom in AI outputs

Meta attracts scientific elite from OpenAI and raises the stakes

Meta continues to recruit top researchers aggressively, and this time it has targeted experts from OpenAI. It has hired several prominent names, including Lucas Beyer and Alexander Kolesnikov, significantly strengthening its Superintelligence Labs division. Bonuses worth tens of millions of dollars are rumoured, although the scientists involved dispute the sums.

- Meta has hired at least three computer vision experts from OpenAI

- Media report recruitment packages of up to $100 million

- The new hires are meant to boost the development of autonomous superintelligence

- The move responds to the lukewarm reception of the Llama 4 model and to competition with OpenAI

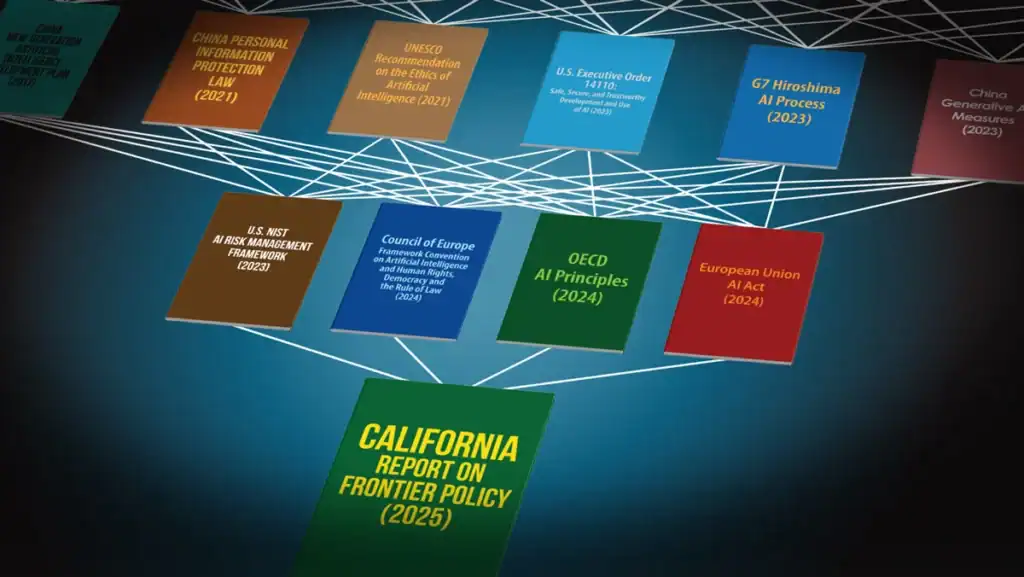

California wants more transparent and safer AI systems

California is holding a legislative debate on new rules for AI. The proposals aim to make models more transparent, protect personal data and strengthen individuals' rights when interacting with automated systems. If passed, they could set a precedent for federal and international AI policy.

- Requirement for explainability of algorithms and their decision making

- Enhanced protection of biometric and sensitive user data

- The proposals also aim to prevent discrimination and bias

- California strengthens its role as a leader in digital legislation

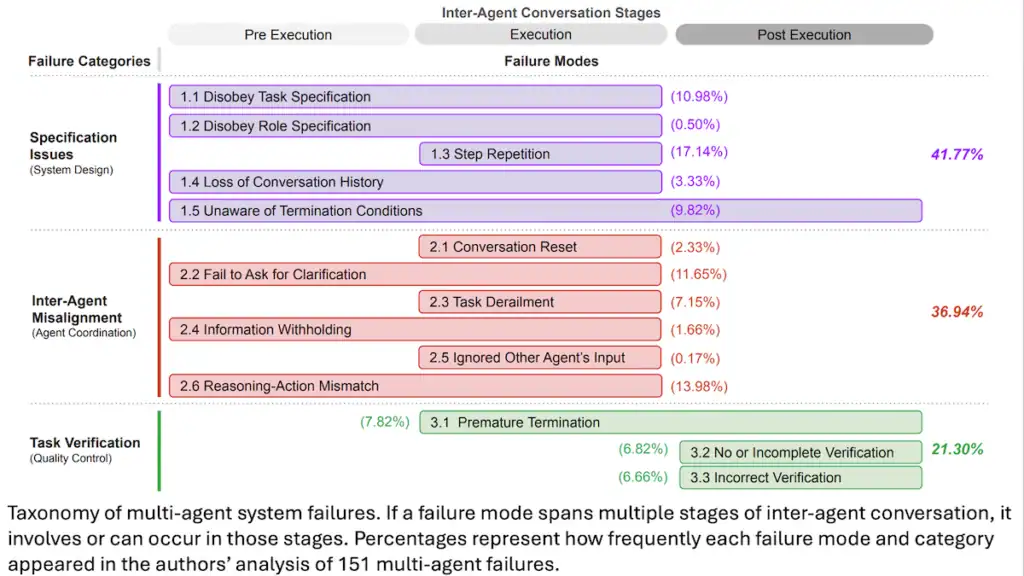

Multi-agent AI: Models collaborate and become more efficient

Research on multi-agent systems is making progress in coordinating AI agents. Instead of a single model, a whole network of agents works together, each specialising in different tasks. This approach significantly improves the performance of systems on complex tasks, from planning to solving academic tests.

- Multi-agent systems allow AI to solve complex tasks collectively

- Grok 4 Heavy uses agent collaboration on PhD-level tests

- Results show higher efficiency than individual models

- The field is attracting the attention of major players and research centres

The Batch - DeepLearning.Ai by Andrew Ng / gnews.cz - GH
